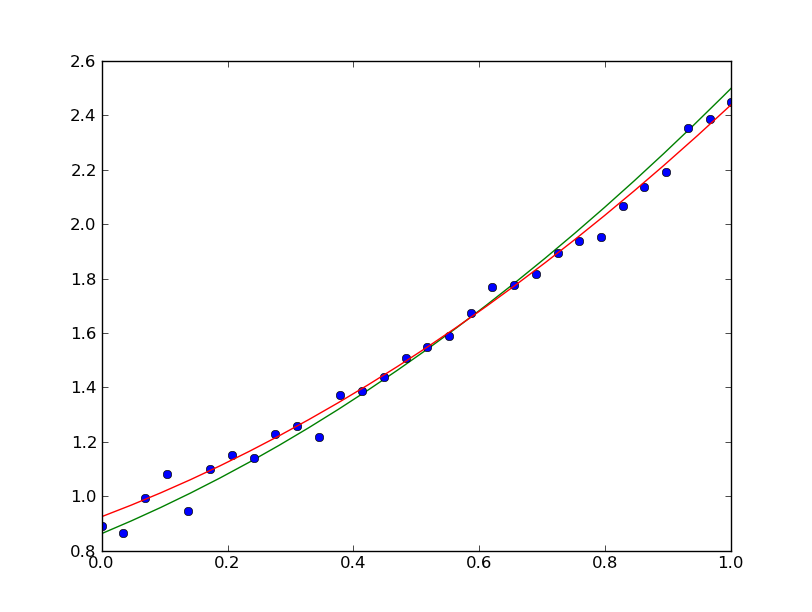

The key steps of the lmfit code are as follows. First, transform the data so that a linear fit can be used. The residual function `decay` ends with `return model - data`, which is the quantity you want to minimize. Then create a set of Parameters: `params.add('lN', value=np.log(5.5), min=0.01, max=100)`, where `value` is the initial value, and `params.add('a', value=-0.5, min=-1, max=-0.001)`, where `min` and `max` define the parameter bounds. Do the fit, here with the leastsq model, using `result = minimize(decay, params, args=(lx, ly))`. Finally, plot the fitted curve with `plt.plot(xnew, np.exp(result.params['lN'].value) * xnew ** result.params['a'].value, 'r')`.

EDIT: Assuming that you have scipy 0.17 or later installed, you can also do this with curve_fit. I show it for your original definition of the power law (red line in the plot below) as well as for the logarithmic data (black line in the plot below).

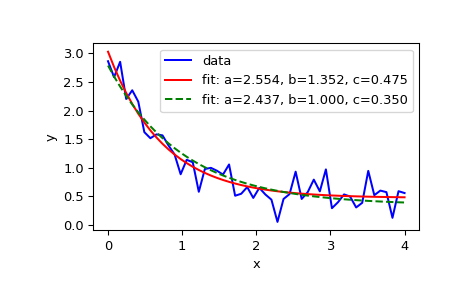
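As a rough illustration of the bounded `curve_fit` approach mentioned in the EDIT, here is a minimal sketch. It is not the answer's original code: the function name `power_law`, the synthetic data, the "true" values 10.5 and 0.08, the seed, and the bound values are all assumptions chosen for the example. It uses the question's form of the power law, `N * x ** (-a)`, and requires scipy 0.17 or later for the `bounds` argument.

```python
import numpy as np
from scipy.optimize import curve_fit


def power_law(x, N, a):
    """Power law in the question's form, y = N * x**(-a)."""
    return N * x ** (-a)


# Synthetic data: illustrative assumptions, not the asker's data.
rng = np.random.default_rng(0)
x_data = np.linspace(0.01, 100.0, 50)
N_true, a_true = 10.5, 0.08
y_data = power_law(x_data, N_true, a_true) + rng.normal(0, 0.5, x_data.size)

# bounds=(lower, upper), one entry per parameter in the order (N, a).
# Keeping a in [0.001, 1] replaces the if/else penalty from the question.
popt, pcov = curve_fit(
    power_law,
    x_data,
    y_data,
    p0=[5.5, 0.5],                          # initial guess, inside the bounds
    bounds=([0.0, 0.001], [np.inf, 1.0]),
)
N_fit, a_fit = popt
print("N =", N_fit, "a =", a_fit)
```

Because the bounds are handled by the optimizer itself, the model function stays smooth, which is usually easier for a least-squares routine than a hard penalty value.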

The test data are generated as a power law plus Gaussian noise: `yData = Norg * xData ** (-aOrg) + np.random.normal(0, 0.5, len(xData))`


So I first apply `np.log` to the original data and then do the fit. With lmfit, the fitted `a` is pretty close to the original value, and `np.exp(2.35450302)` gives 10.53, which is also very close to the original value of `N`. In the resulting plot, the fit describes the data very well. The code, which has a couple of inline comments, starts with the imports `import numpy as np` and `from lmfit import minimize, Parameters, Parameter, report_fit`.
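The log-space fit described above can be sketched without lmfit by swapping in `scipy.optimize.least_squares`, which also accepts parameter bounds. This is a stand-in for illustration, not the answer's lmfit code: the function name `residual`, the synthetic data, the values 10.5 and 0.08, and the seed are assumptions. The starting values and bounds mirror the ones quoted for `lN` and `a`.

```python
import numpy as np
from scipy.optimize import least_squares


def residual(params, lx, ly):
    """Linear model in log space minus the data: (a * lx + lN) - ly."""
    lN, a = params
    return a * lx + lN - ly


# Synthetic data: illustrative assumptions, not the asker's data.
rng = np.random.default_rng(1)
x_data = np.linspace(0.01, 100.0, 50)
y_data = 10.5 * x_data ** (-0.08) + rng.normal(0, 0.5, x_data.size)

# Transform the data so that a linear fit can be used.
lx, ly = np.log(x_data), np.log(y_data)

result = least_squares(
    residual,
    x0=[np.log(5.5), -0.5],                  # initial values for (lN, a)
    bounds=([0.01, -1.0], [100.0, -0.001]),  # (lower, upper) per parameter
    args=(lx, ly),
)
lN_fit, a_fit = result.x
N_fit = np.exp(lN_fit)                       # back-transform ln(N) to N
print("N =", N_fit, "a =", a_fit)
```

Note that fitting in log space weights the points differently from fitting the raw data, so the two approaches generally give slightly different parameter estimates.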

So, I'm trying to fit a set of data with a power law of the following kind: `def f(x, N, a):  # Power law fit`, whose body starts with `if a > 0:`, where the else condition is just to force `a` to be positive. Using curve_fit yields an awful fit (green line), returning values of 1.2e+04 and 1.9e-07 for `N` and `a`, respectively, with absolutely no intersection with the data. From fits I've put in manually, the values should land around 1e-07 and 1.2 for `N` and `a`, respectively, though putting those into curve_fit as initial parameters doesn't change the result. Removing the condition for `a` to be positive results in a worse fit, as it chooses a negative `a`, which leads to a fit with the wrong sign of the slope. I can't figure out how to get a believable, let alone reliable, fit out of this routine, but I can't find any other good Python curve fitting routines. Do I need to write my own least-squares algorithm, or is there something I'm doing wrong here?

In the original post, I showed a solution that uses lmfit, which allows you to assign bounds to your parameters. Starting with version 0.17, scipy also allows you to assign bounds to your parameters directly (see documentation). Please find this solution after the EDIT; it can hopefully serve as a minimal example of how to use scipy's curve_fit with parameter bounds.

As suggested by Weckesser, you could use lmfit to get this task done, which allows you to assign bounds to your parameters and avoids this 'ugly' if-clause. Since you do not provide any data, I created some. I fit them, as suggested, by transforming the power law into a linear function. Starting from `y = N * x ** a`, taking the logarithm of both sides gives an expression that is linear in `ln(x)`.
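The transformation of the power law into a linear function can be written out as follows. It assumes $x > 0$, $y > 0$ and $N > 0$ so that the logarithms are defined, and it uses the answer's convention $y = N x^{a}$, so this $a$ corresponds to $-a$ in the question's function `f`.

$$
y = N x^{a}
\quad\Longrightarrow\quad
\ln y = \ln\!\left(N x^{a}\right) = a \ln x + \ln N
$$

A straight-line fit of $\ln y$ against $\ln x$ therefore has slope $a$ and intercept $\ln N$, and $N$ is recovered by exponentiating the intercept.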